Production Agent-RAG Architectures

Introduction

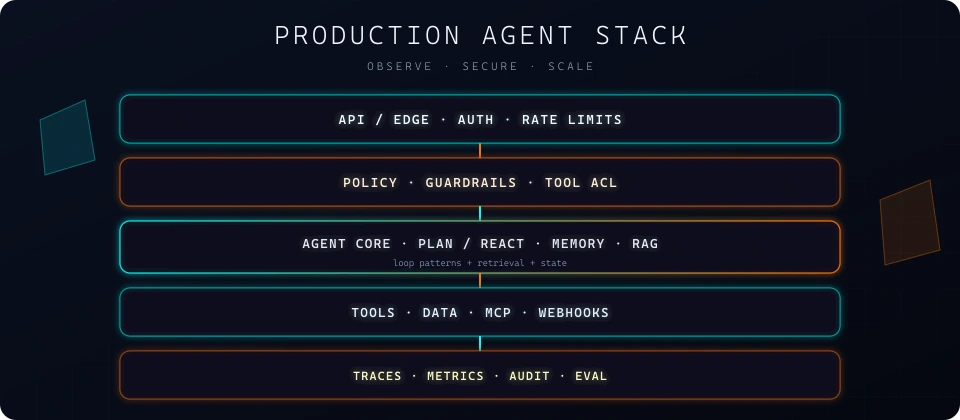

AI Agent Loop Patterns names the agent cycles (think–act–observe and its extensions). This page focuses on one production stack, an enterprise knowledge copilot, and on how to combine RAG, optional ReAct, memory, and tools to produce grounded answers from approved sources.

The cards below have a SOLUTION / STACK line where applicable, plus a deep dive on ingest, hybrid retrieval, rerank, augment, generate, and optional agent steps. For sense, think, act, observe, and finish, see Anatomy of an AI agent; for retrieval pipelines, Anatomy of RAG.

Enterprise knowledge copilot

The client wants employees, partners, and customers to get fast, consistent answers from policies, handbooks, and product information—without every repeated question being routed to subject-matter experts. The product is an internal knowledge assistant: based on approved sources, aligned with access rights by role or tenant, and able to show where an answer comes from—not a generic chatbot that invents or pulls from the public web.
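The access-rights and provenance requirements above can be sketched as a filter that runs on retrieved chunks before anything reaches the model. This is a minimal sketch, not a prescribed design: the `Chunk` fields, the role names, and the sample corpus are all made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    text: str
    source: str               # title shown to the user as the citation
    tenant: str               # owning tenant; chunks never cross tenants
    allowed_roles: frozenset  # roles permitted to read this chunk


def visible_chunks(chunks, tenant, roles):
    """Keep chunks owned by `tenant` that at least one of `roles` may read."""
    roles = frozenset(roles)
    return [c for c in chunks if c.tenant == tenant and c.allowed_roles & roles]


def cited_sources(chunks):
    """Distinct sources in first-seen order, for display under the answer."""
    return list(dict.fromkeys(c.source for c in chunks))


# Hypothetical corpus, for illustration only.
CORPUS = [
    Chunk("Travel is reimbursed within 30 days.", "Expense Policy",
          "acme", frozenset({"employee"})),
    Chunk("Open cases are reviewed weekly.", "HR Case Handbook",
          "acme", frozenset({"hr_admin"})),
    Chunk("New hires get a laptop on day one.", "Globex Onboarding Guide",
          "globex", frozenset({"employee"})),
]
```

Filtering after retrieval keeps the sketch short; in a real deployment the same tenant and role constraints would more likely be pushed into the search query itself so that restricted chunks are never fetched.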

Answers should draw on the private corpus, but the conversation should feel like talking to a competent colleague. When the situation matches how the organization usually documents or escalates work, the assistant may offer to open a ticket in the organization's ticketing system so technical staff can resolve the case, but only after unambiguous confirmation from the user. When a reply hinges on totals, proration, or unit conversion from numbers that appear in retrieved, approved material, a small calculator tool can apply exact arithmetic instead of leaving that to the model. Context from earlier in the conversation stays available so a clarifying reply does not require restating the whole story.

SOLUTION: AGENTIC RAG: RAG + RE-ACT(MULTI + SPEAK + PLANNING + REFLECTION) + MEMORY

Typical tools: vector search / file fetch, optional calculator or ticket creation with approval.
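The two optional tools could look roughly like the sketch below. It assumes a calculator limited to `+ - * /` on numeric literals (evaluated with `Decimal` so that typed decimals stay exact) and a ticket gate that only fires on an explicit "yes" or "confirm". The names `calculate`, `create_ticket_if_confirmed`, and the `create_ticket` callback are hypothetical, not part of any particular ticketing API.

```python
import ast
import operator
from decimal import Decimal

_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}


def calculate(expression: str) -> Decimal:
    """Evaluate + - * / on numeric literals only; reject everything else."""
    def ev(node):
        if (isinstance(node, ast.Constant)
                and isinstance(node.value, (int, float))
                and not isinstance(node.value, bool)):
            # str() keeps the literal as typed, so 0.1 becomes Decimal("0.1").
            return Decimal(str(node.value))
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](ev(node.left), ev(node.right))
        if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
            return -ev(node.operand)
        raise ValueError("unsupported expression")

    return ev(ast.parse(expression, mode="eval").body)


CONFIRMATIONS = {"yes", "confirm"}  # assumed wording for an unambiguous "yes"


def create_ticket_if_confirmed(summary, user_reply, create_ticket):
    """Call the (hypothetical) `create_ticket` callback only after approval."""
    if user_reply.strip().lower() not in CONFIRMATIONS:
        return None
    return create_ticket(summary)
```

Parsing with `ast` instead of calling `eval` keeps the tool surface narrow: names, function calls, and attribute access are rejected rather than executed.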

Patterns: ground with RAG before acting; keep memory scoped to session or tenant so facts do not leak across users.
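One way to keep memory scoped is to key every read and write by both tenant and session, so there is no code path that returns another user's turns. The sketch below is an in-memory illustration under that assumption; the `ScopedMemory` class and its `max_turns` limit are hypothetical.

```python
from collections import defaultdict


class ScopedMemory:
    """Conversation turns keyed by (tenant, session); nothing is shared."""

    def __init__(self, max_turns=20):
        if max_turns < 1:
            raise ValueError("max_turns must be at least 1")
        self._turns = defaultdict(list)
        self._max_turns = max_turns

    def append(self, tenant, session, role, text):
        turns = self._turns[(tenant, session)]
        turns.append((role, text))
        del turns[:-self._max_turns]  # keep only the most recent turns

    def history(self, tenant, session):
        # Return a copy so callers cannot mutate stored state.
        return list(self._turns.get((tenant, session), []))
```

For example, after `memory.append("acme", "s1", "user", "What is the travel policy?")`, calling `memory.history("globex", "s1")` returns an empty list even though the session ID matches.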

When it fits: internal Q&A, policy lookup, onboarding assistants. Add multi-action only when lookups are independent (e.g. two knowledge bases).
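When the lookups really are independent, multi-action can be as simple as issuing them concurrently and merging the results. The sketch below uses `asyncio.gather`; `search_kb` is a stand-in for a real retrieval call and the knowledge-base names are invented.

```python
import asyncio


async def search_kb(name, query):
    """Stand-in for a real retrieval call against one knowledge base."""
    await asyncio.sleep(0.01)  # simulates network or index latency
    return [f"{name}: result for {query!r}"]


async def multi_lookup(query, kb_names):
    """Query every knowledge base concurrently; results keep input order."""
    results = await asyncio.gather(*(search_kb(n, query) for n in kb_names))
    return dict(zip(kb_names, results))


if __name__ == "__main__":
    merged = asyncio.run(
        multi_lookup("parental leave", ["hr_policies", "product_catalog"])
    )
    print(merged)
```

If one lookup needs the output of another, the calls are no longer independent and should run in sequence instead.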

Additional Ideas:

- Exponential backoff for retries

- Caching strategies for performance

- FAISS is a similarity-search library, not a database. The usual pattern: vectors stay in memory for queries; an index file on disk is optional and allows reloading after a restart; payloads (text, metadata) are usually stored outside FAISS and looked up by vector ID.

- IndexedDB is the browser’s embedded database (async, structured storage in the user profile). Cache retrieval results, embedding tables, or large payloads there so repeat queries skip network and you keep only a hot slice in RAM—faster revisits and less load on your API when latency matters.

- Composition and decomposition of an agentic system into smaller pieces.

- Sharding or partitioning the database to speed up retrieval

- Some system components can run concurrently or in parallel

- Real-time interaction with streaming responses
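The first two ideas in the list, retries with exponential backoff and caching, can be sketched in a few lines. This is an illustration under stated assumptions: the delay parameters, the choice of "full jitter", the set of retryable exceptions, and the `TTLCache` class are all placeholders to tune or replace.

```python
import random
import time


def retry_with_backoff(fn, retries=4, base_delay=0.5, max_delay=8.0,
                       retry_on=(ConnectionError, TimeoutError),
                       sleep=time.sleep):
    """Call `fn`, retrying transient errors with capped, jittered delays."""
    for attempt in range(retries + 1):
        try:
            return fn()
        except retry_on:
            if attempt == retries:
                raise  # out of attempts: surface the last error
            delay = min(max_delay, base_delay * 2 ** attempt)
            sleep(random.uniform(0, delay))  # full jitter spreads out retries


class TTLCache:
    """Tiny time-to-live cache, e.g. for repeated retrieval queries."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._items = {}  # key -> (stored_at, value)

    def get_or_compute(self, key, compute):
        now = self._clock()
        hit = self._items.get(key)
        if hit is not None and now - hit[0] < self._ttl:
            return hit[1]
        value = compute()
        self._items[key] = (now, value)
        return value
```

A short TTL matters here because approved sources change; cached retrieval results should expire, or be invalidated, when the underlying documents are updated.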

Client Request: Enterprise Knowledge Assistance

Stakeholders need a single assistant that answers operational and policy questions with one consistent story across handbooks, forms, product catalogs, and ticket or case history — with clear ownership of where each fact came from and no mixing of customer or contract boundaries. Answers must stay current when sources change (revoked policies, superseded SKUs, amended SLAs) and must support audit-style explanations ("why is this allowed?", "what changed since last quarter?", "which obligation applies here?") without escalating every question to SMEs. Volume and wording of questions vary wildly; latency and spend still need predictable caps so teams can roll this out broadly, not only to VIP users.
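The "predictable caps" on latency and spend could be enforced with a per-request budget that every model or tool call must charge before it runs. The sketch below is one possible shape; `RequestBudget`, `BudgetExceeded`, and the use of token counts as the spend unit are assumptions, not requirements from the client.

```python
import time


class BudgetExceeded(Exception):
    """Raised when a request would exceed its latency or token cap."""


class RequestBudget:
    def __init__(self, max_tokens, max_seconds, clock=time.monotonic):
        self._max_tokens = max_tokens
        self._clock = clock
        self._deadline = clock() + max_seconds
        self.tokens_used = 0

    def charge(self, tokens):
        """Reserve `tokens` for the next step, or refuse if a cap is hit."""
        if self._clock() > self._deadline:
            raise BudgetExceeded("latency cap reached")
        if self.tokens_used + tokens > self._max_tokens:
            raise BudgetExceeded("token cap reached")
        self.tokens_used += tokens
```

When a cap is hit, the agent loop can stop and return the best grounded answer it has so far rather than continuing to plan or reflect.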

Conclusion

Pick the smallest stack that meets the use case: add planning, memory, or reflection only when accuracy or task horizon demands it, because each layer adds latency and new ways to fail. Ground with RAG when facts live outside the model; keep tool surfaces narrow for high-risk flows. More on risks and controls: Prompt injection, RAG Design Patterns (Chunking, Retrieval, Ranking), AI Agent Loop Patterns.