Anatomy of RAG

Introduction

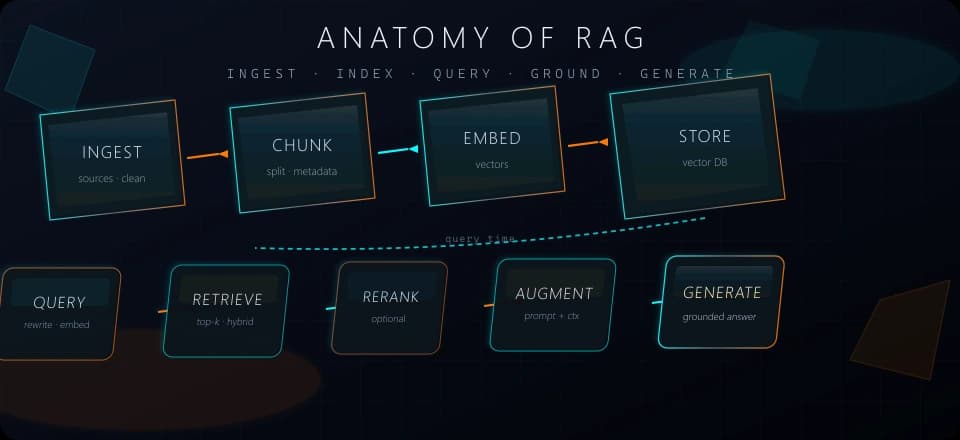

Retrieval-augmented generation (RAG) couples a retriever to an LLM: you index your sources, pull relevant segments at query time, and inject them into the prompt so answers stay grounded in your data. This page outlines the typical end-to-end pipeline for vector RAG and the core components teams wire together.

End-to-end pipeline (typical vector RAG)

Most production vector RAG flows follow the same nine stages:

Ingest — load sources (PDFs, HTML, tickets, DB exports). Extract the content, then clean and sanitize it.

You decide what is in scope for search: headers, footers, and boilerplate often hurt retrieval if left in. Pipelines may run on a schedule or on every document change so the index does not drift silently from the source of truth.
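Scheduled or change-driven ingestion needs a way to tell which documents actually changed. The sketch below assumes each source document has a stable id and that a SHA-256 hash of its cleaned text is stored when it is indexed. The names `content_hash` and `plan_reindex` are hypothetical and do not come from any specific framework.

```python
import hashlib


def content_hash(text: str) -> str:
    """Hash the cleaned text so unchanged documents can be skipped on re-ingest."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def plan_reindex(
    sources: dict[str, str], indexed: dict[str, str]
) -> tuple[list[str], list[str]]:
    """Compare the source of truth against hashes recorded at the last ingest.

    sources: doc_id -> cleaned text as it exists now
    indexed: doc_id -> hash stored when the doc was last indexed
    Returns (doc_ids to re-chunk and re-embed, doc_ids to delete from the index).
    """
    to_upsert = [
        doc_id
        for doc_id, text in sources.items()
        if indexed.get(doc_id) != content_hash(text)
    ]
    # Documents removed at the source must also leave the index.
    to_delete = [doc_id for doc_id in indexed if doc_id not in sources]
    return to_upsert, to_delete


if __name__ == "__main__":
    indexed = {"a": content_hash("old"), "b": content_hash("same"), "c": content_hash("gone")}
    print(plan_reindex({"a": "new", "b": "same", "d": "fresh"}, indexed))
```

Hashing the cleaned text rather than the raw file means cosmetic changes in stripped boilerplate do not trigger a re-embed.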

Chunk — split documents into segments. Common strategies:

Fixed-size — N tokens/chars per window; fastest, but cuts can ignore structure.

Context-aware — split on outline/layout when you have it; default for handbooks and wikis.

Overlap — repeat a border slice between consecutive chunks; better recall at cuts; larger index.

Parent/child — retrieve small child chunks, then expand to the parent section for prompting.

Late chunking — encode the whole document first, then pool token embeddings into per-chunk vectors; needs long-context embedders.

Contextual retrieval — an LLM writes a short note situating each chunk within the whole document, prepended before embedding; adds cost at ingest, strengthens retrieval on critical corpora.

Tradeoff: tiny chunks miss surrounding meaning; huge chunks mix topics and weaken similarity. Overlap and hierarchy mitigate boundary issues.
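The fixed-size and overlap strategies combine into a few lines. This is a minimal sketch that assumes whitespace-separated words as a stand-in for tokens; a real pipeline would count tokens with the embedding model's own tokenizer. `chunk_words` is a hypothetical helper name.

```python
def chunk_words(text: str, size: int = 200, overlap: int = 40) -> list[str]:
    """Fixed-size chunking with overlap.

    Words approximate tokens here. Each window starts `size - overlap` words
    after the previous one, so consecutive chunks share `overlap` border words.
    """
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start : start + size]))
        # Stop once this window already reaches the end of the text.
        if start + size >= len(words):
            break
    return chunks


if __name__ == "__main__":
    sample = " ".join(f"w{i}" for i in range(10))
    print(chunk_words(sample, size=4, overlap=1))
```

Raising `overlap` improves recall for sentences that straddle a cut, at the price of more chunks and a larger index.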

Embed — turn each chunk into a vector with an embedding model.

The model maps text into a space where “near” means semantically similar under its training. You batch embeddings for throughput and version them with the index so you can re-embed when you upgrade models.
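Batching and versioning can be sketched without a real model. Here `toy_embed` is a hypothetical stand-in (a hashed bag of words, L2-normalized) for an actual embedding model, and `MODEL_ID` is a made-up label stored with every vector so that a later model upgrade can find and re-embed old vectors.

```python
import hashlib
import math
from dataclasses import dataclass

MODEL_ID = "toy-hash-embedder-v1"  # hypothetical model label, stored with every vector
DIM = 64


def toy_embed(text: str, dim: int = DIM) -> list[float]:
    """Stand-in for a real embedding model: hashed bag of words, L2-normalized."""
    vec = [0.0] * dim
    for word in text.lower().split():
        bucket = int(hashlib.md5(word.encode("utf-8")).hexdigest(), 16) % dim
        vec[bucket] += 1.0
    norm = math.sqrt(sum(x * x for x in vec)) or 1.0  # avoid dividing by zero on empty text
    return [x / norm for x in vec]


@dataclass
class EmbeddedChunk:
    chunk_id: str
    vector: list[float]
    model_id: str  # lets you locate vectors that need re-embedding after an upgrade


def embed_in_batches(chunks: dict[str, str], batch_size: int = 32) -> list[EmbeddedChunk]:
    ids = list(chunks)
    out: list[EmbeddedChunk] = []
    for i in range(0, len(ids), batch_size):
        batch = ids[i : i + batch_size]
        # A real client would send the whole batch in one request for throughput.
        vectors = [toy_embed(chunks[cid]) for cid in batch]
        out.extend(EmbeddedChunk(cid, v, MODEL_ID) for cid, v in zip(batch, vectors))
    return out
```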

Storage — store vectors + ids + payload in a vector DB or search engine.

Payload holds the chunk text and metadata for filtering and display at read time. The store must support delete/update when documents change, not only append, or stale vectors will leak into answers.
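The delete/update requirement is easiest to see in a minimal in-memory store. This sketch assumes chunk ids are unique and each record carries its parent `doc_id`; the class and method names are hypothetical, not any vector database's API.

```python
from dataclasses import dataclass, field


@dataclass
class Record:
    chunk_id: str
    doc_id: str
    vector: list[float]
    payload: dict  # chunk text + metadata used for filtering and display


@dataclass
class InMemoryStore:
    records: dict[str, Record] = field(default_factory=dict)

    def upsert(self, record: Record) -> None:
        # Overwrite an existing chunk with the same id instead of appending a duplicate.
        self.records[record.chunk_id] = record

    def delete_document(self, doc_id: str) -> int:
        """Remove every chunk of a document; return how many were removed."""
        stale = [cid for cid, r in self.records.items() if r.doc_id == doc_id]
        for cid in stale:
            del self.records[cid]
        return len(stale)

    def replace_document(self, doc_id: str, new_records: list[Record]) -> None:
        # Delete first, so chunks missing from the new version cannot leak into answers.
        self.delete_document(doc_id)
        for r in new_records:
            self.upsert(r)
```

Replacing a document wholesale, rather than upserting only the new chunks, is what keeps a shortened document from leaving orphaned tail chunks behind.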

Query — user question → optional query rewrite / expansion → query embedding (vectorize with the same model and similarity metric: cosine, dot, L2, etc.).

The query embedding must use the same model and distance definition as the corpus, or rankings are meaningless. Rewrites and multi-query strategies cost extra latency but recover recall when user phrasing does not match document phrasing.
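The similarity metrics named above, written out in plain Python, plus a hypothetical guard (`check_compatible`) that refuses to search when the query and the index disagree on model id or dimension. For L2-normalized vectors, cosine and dot product are identical and squared L2 distance equals 2 − 2·cosine, so all three give the same ranking; for unnormalized vectors the rankings can differ.

```python
import math


def dot(a: list[float], b: list[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def cosine(a: list[float], b: list[float]) -> float:
    # Assumes non-zero vectors.
    return dot(a, b) / (math.sqrt(dot(a, a)) * math.sqrt(dot(b, b)))


def l2(a: list[float], b: list[float]) -> float:
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def check_compatible(query_model: str, index_model: str, query_dim: int, index_dim: int) -> None:
    """Refuse to search when the query was embedded differently from the corpus."""
    if query_model != index_model or query_dim != index_dim:
        raise ValueError(
            f"query embedded with {query_model} ({query_dim}d) "
            f"but index uses {index_model} ({index_dim}d)"
        )
```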

Retrieve — similarity search for the top-k nearest chunks, optionally with metadata filters or hybrid (BM25 + vector) search.

Top-k is a cap, not a guarantee of relevance: you may widen k and then compress or rerank. Hybrid search helps when users type product names, IDs, or jargon that embeddings alone would miss.
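One common way to merge BM25 and vector results is reciprocal rank fusion (RRF). The section does not prescribe a fusion method, so treat this as one option among several. The sketch assumes each retriever has already returned an ordered list of chunk ids; `k = 60` is a commonly used default, not a tuned value.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60, top_n: int = 5) -> list[str]:
    """Fuse several ranked lists of chunk ids (e.g. BM25 and vector search).

    Each list contributes 1 / (k + rank) for every id it contains (rank starts
    at 1), so ids ranked high by either retriever rise to the top.
    """
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, chunk_id in enumerate(ranking, start=1):
            scores[chunk_id] += 1.0 / (k + rank)
    # Highest fused score first; sorted() is stable, so ties keep first-seen order.
    return sorted(scores, key=scores.__getitem__, reverse=True)[:top_n]


if __name__ == "__main__":
    bm25 = ["sku-123", "c7", "c2"]  # exact product id matched lexically
    vector = ["c2", "c9", "c7"]
    print(reciprocal_rank_fusion([bm25, vector]))
```

Because RRF uses only ranks, BM25 scores and cosine similarities never need to be put on a common scale.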

Optional rerank — cross-encoder or LLM scores the shortlist for precision.

First-stage retrieval favors recall; reranking spends more compute on a short list to order by true usefulness. Skipping rerank is valid for prototypes; production systems often add it once traffic and the quality bar grow.
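The two-stage shape (wide shortlist, then a more expensive pairwise scorer) can be sketched with a pluggable scoring function. `overlap_scorer` is a deliberately crude, hypothetical stand-in for a cross-encoder or LLM judge; only the structure of `rerank` is the point here.

```python
from typing import Callable

Scorer = Callable[[str, str], float]  # (query, passage) -> relevance score


def overlap_scorer(query: str, passage: str) -> float:
    """Toy stand-in for a cross-encoder: fraction of query terms found in the passage."""
    q = set(query.lower().split())
    p = set(passage.lower().split())
    return len(q & p) / len(q) if q else 0.0


def rerank(query: str, shortlist: dict[str, str], scorer: Scorer, top_n: int = 3) -> list[str]:
    """Second stage: score every (query, passage) pair and keep the best top_n.

    shortlist maps chunk_id -> text from a wide, recall-oriented first stage;
    the scorer spends more compute per pair to improve precision.
    """
    ranked = sorted(shortlist, key=lambda cid: scorer(query, shortlist[cid]), reverse=True)
    return ranked[:top_n]
```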

Augment — build prompt: system rules + retrieved chunks + user question.

You define how citations are shown, how much context fits in the window, and whether to deduplicate overlapping chunks. Clear structure here reduces the model inventing facts that are not in the passages.
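A minimal prompt builder covering the three decisions above: numbered citations, a context budget, and deduplication. It assumes chunks arrive best-first and uses characters as a crude proxy for tokens. Deduplication here only catches exact repeats after whitespace normalization; near-duplicate overlap between adjacent chunks would need a fuzzier check. `build_prompt` and the instruction wording are hypothetical.

```python
def build_prompt(
    system_rules: str,
    question: str,
    chunks: list[tuple[str, str]],
    max_context_chars: int = 4000,
) -> str:
    """Assemble system rules + numbered, deduplicated passages + the user question.

    chunks is an ordered list of (chunk_id, text), best first.
    """
    seen: set[str] = set()
    passages: list[str] = []
    used = 0
    for chunk_id, text in chunks:
        key = " ".join(text.split())  # normalize whitespace before comparing
        if key in seen:
            continue  # the same passage retrieved twice is shown once
        if used + len(key) > max_context_chars:
            break  # stop before overflowing the context budget
        seen.add(key)
        used += len(key)
        passages.append(f"[{len(passages) + 1}] ({chunk_id}) {key}")
    context = "\n".join(passages)
    return (
        f"{system_rules}\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\n"
        "Answer using only the context above and cite passages as [n]."
    )
```

Keeping the chunk id next to each citation number lets you map a cited `[n]` back to the stored payload when auditing an answer.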

Generate — LLM answers using that context only (grounding).

Sampling temperature and tool settings affect how closely the model sticks to the evidence rather than loosely paraphrasing it. Logging prompts and retrieved ids makes it possible to audit failures when an answer diverges from your sources.
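The logging advice can be as simple as one JSON line per generation. This sketch assumes you already have the final prompt, the retrieved chunk ids, and the answer in hand; the field names and `audit_record` are hypothetical, and the model name in any call is whatever your stack uses.

```python
import json
import time
import uuid


def audit_record(
    question: str,
    prompt: str,
    retrieved_ids: list[str],
    answer: str,
    model: str,
    temperature: float,
) -> str:
    """Serialize one generation as a JSON line for later failure analysis."""
    return json.dumps(
        {
            "request_id": str(uuid.uuid4()),
            "ts": time.time(),
            "model": model,
            "temperature": temperature,  # sampling settings affect faithfulness, so record them
            "question": question,
            "retrieved_ids": retrieved_ids,  # which chunks the answer could draw on
            "prompt": prompt,
            "answer": answer,
        },
        ensure_ascii=False,
    )
```

When an answer diverges from your sources, the record shows whether the right chunk was never retrieved (a retrieval problem) or was retrieved and ignored (a generation problem).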

Core components

| Component | Role |
|---|---|
| Data layer | Raw documents + chunk metadata (tenant, doc_id, section, ACLs). |
| Chunking strategy | Fixed, semantic, layout-aware, hierarchical; see patterns in RAG architectures. |
| Embedding model | Must be the same model at vectorize/insert and query time (so dimensions match); prefer one trained on a domain close to your corpus. |
| Storage | Vector DB, graph DB, hybrid stack, or in-memory (server or browser). |
| Retriever | Returns candidate chunks; may be multi-stage (coarse then fine). |
| Generator | LLM that consumes the augmented prompt; temperature and tools affect faithfulness. |
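The table's retriever and generator can be expressed as small interfaces so that implementations swap without touching the wiring. This sketch uses `typing.Protocol`; `KeywordRetriever` and `EchoGenerator` are hypothetical placeholders for a real retriever and a real LLM client.

```python
from typing import Protocol


class Retriever(Protocol):
    def retrieve(self, query: str, k: int) -> list[str]: ...


class Generator(Protocol):
    def generate(self, prompt: str) -> str: ...


def answer(question: str, retriever: Retriever, generator: Generator, k: int = 5) -> str:
    """Wire the components: retrieve candidate chunks, augment the prompt, generate."""
    chunks = retriever.retrieve(question, k)
    context = "\n".join(f"[{i}] {c}" for i, c in enumerate(chunks, start=1))
    return generator.generate(f"Context:\n{context}\n\nQuestion: {question}")


class KeywordRetriever:
    """Hypothetical toy retriever: ranks in-memory chunks by shared query terms."""

    def __init__(self, chunks: list[str]) -> None:
        self.chunks = chunks

    def retrieve(self, query: str, k: int) -> list[str]:
        terms = set(query.lower().split())
        ranked = sorted(self.chunks, key=lambda c: len(terms & set(c.lower().split())), reverse=True)
        return ranked[:k]


class EchoGenerator:
    """Placeholder for an LLM call: returns the prompt so the wiring can be inspected."""

    def generate(self, prompt: str) -> str:
        return prompt
```

Swapping `KeywordRetriever` for a vector or hybrid retriever, or `EchoGenerator` for a real model client, leaves `answer` unchanged.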

Conclusion

The skeleton is stable even when vendors differ: index content, search by similarity (often with hybrid or reranking), then force the model to answer from retrieved context. Where systems diverge is chunking, embedding choice, and how aggressively you filter and rerank before generation. For concrete retrieval patterns and stacks, see RAG architectures.